Accomplishments

- Pose Estimation Testing

- 360-to-3D Tool Matrix

- 360-to-3D Reconstruction Architecture

- GMAT Study Plan

Architecture. “The art and technique of designing and building”

(Link)

Building something big often begins with outlining and completing small, atomic steps. To start baking a cake, first you crack an egg. I began testing options to estimate camera positions from 360 photos and have since refined the steps related to their 3D reconstruction. I studied and reviewed dozens of tools and libraries related to this process and compiled a matrix of their details. I also outlined my goals, and the steps toward them, for taking the GMAT.

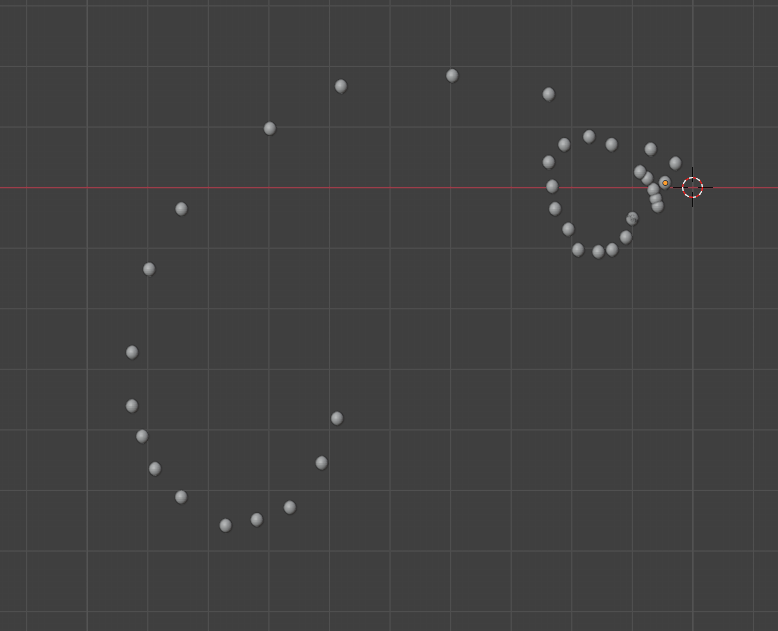

Pose Estimation Testing

Determining where each photo was taken is a foundational step in 3D reconstruction. While this is trivial in many cases, efficient, affordable, high-quality reconstruction of a 3D environment comes with constraints that make it much more challenging. I tested a variety of commercial solutions and available libraries to better understand the process and how my requirements affect it.

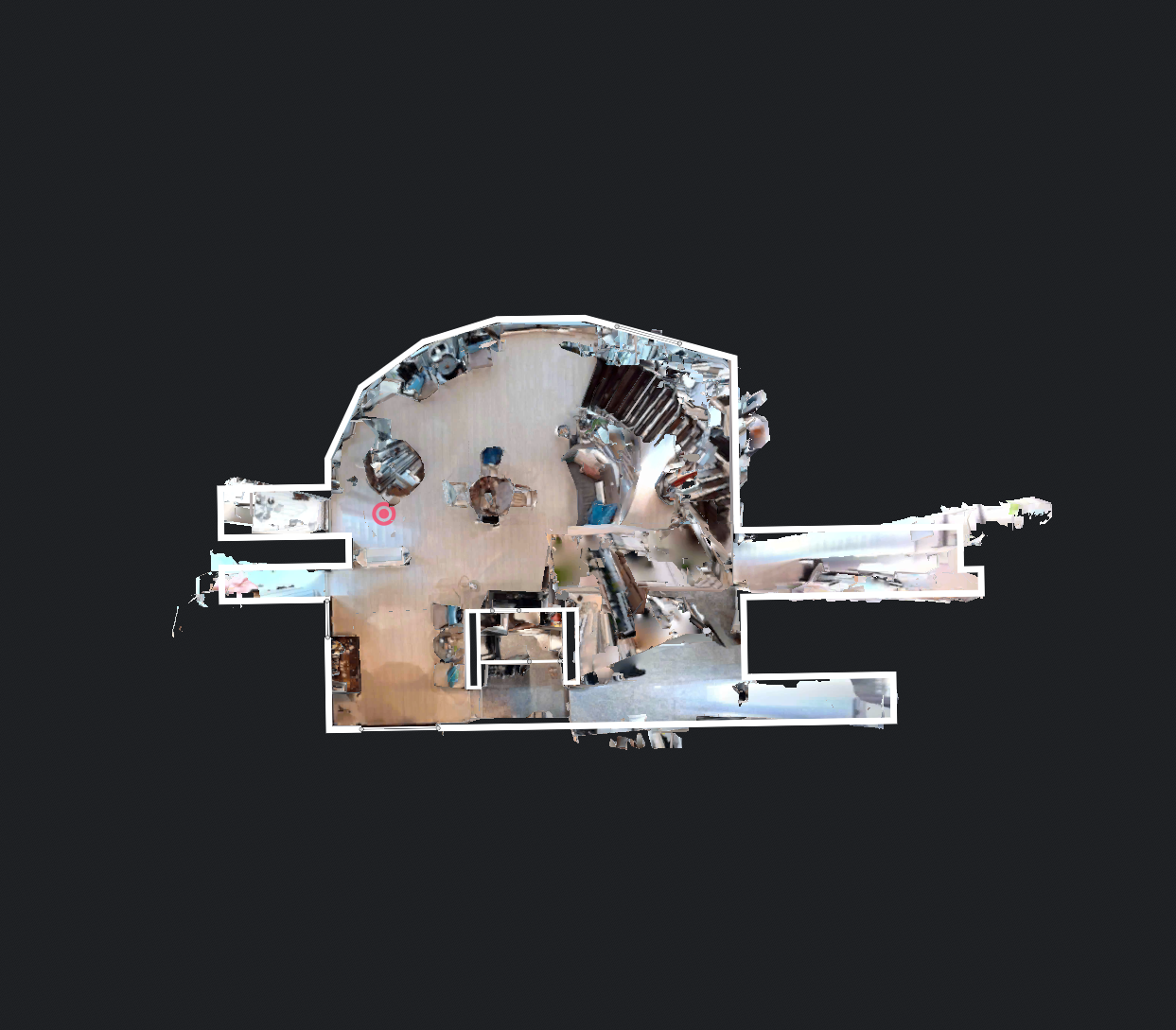

Some commercial tools perform the structure-from-motion (SfM) step, which determines the camera positions, as part of a larger process. Similar datasets were provided to Matterport and Realsee AI. Matterport was able to take the sparse camera data and combine it, while Realsee AI seemed to fail at aligning most of the cameras. Matterport bundles the camera positions as an export with the completed mesh, at a cost. This cost may be fair and affordable for many projects; however, it raises the barrier to entry and puts environmental preservation and immersive experiences out of reach for many places.

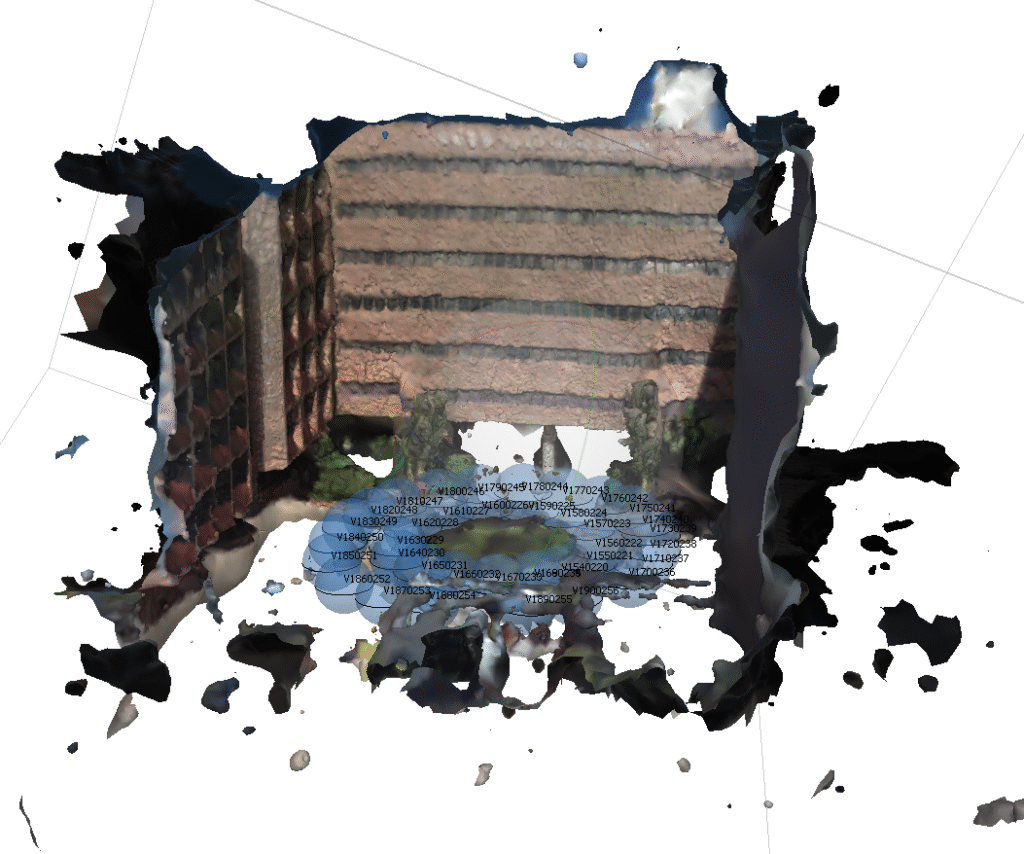

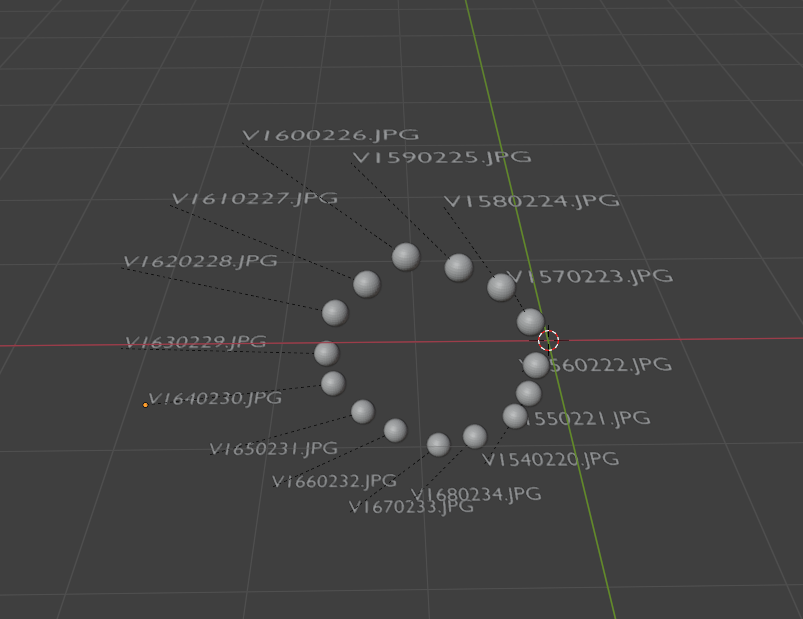

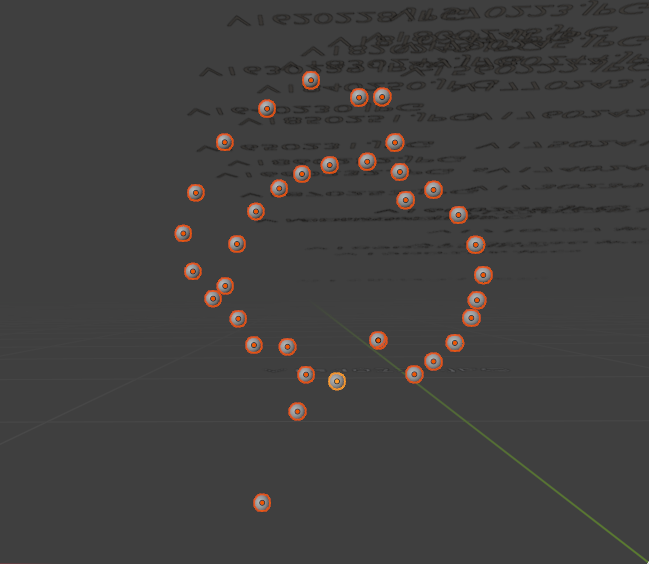

RealityScan and Metashape are commercial tools with very different associated costs. Over one year, RealityScan costs at most as much as delivering 21 scans with Matterport. Metashape is a one-time purchase and becomes more affordable than Matterport after 59 reconstructions. RealityScan does not yet natively support 360 photos and failed to correctly position most images. Metashape did a much better job with some test data: every camera was correctly aligned. I tried to see if this would also lead to a usable mesh; however, the result had many holes. Further, with the sparse test data of Supalai Place, it failed to position more than 50% of the cameras.
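The break-even comparisons above (a fixed yearly or one-time price against a per-scan fee) come down to one division. The sketch below shows that arithmetic only; the function name and the prices are hypothetical placeholders, not actual vendor pricing.

```python
import math


def break_even_scans(fixed_cost: float, per_scan_cost: float) -> int:
    """Return the smallest number of scans at which paying per scan
    costs at least as much as the fixed price."""
    if per_scan_cost <= 0:
        raise ValueError("per_scan_cost must be positive")
    return math.ceil(fixed_cost / per_scan_cost)


# Hypothetical placeholder prices, chosen only to exercise the function.
EXAMPLE_FIXED_COST = 1000.0
EXAMPLE_PER_SCAN_COST = 30.0

if __name__ == "__main__":
    scans = break_even_scans(EXAMPLE_FIXED_COST, EXAMPLE_PER_SCAN_COST)
    print(f"Fixed price pays for itself after {scans} scans")
```

Plugging in the real license price and per-scan fee would reproduce figures like the 21 and 59 quoted above.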

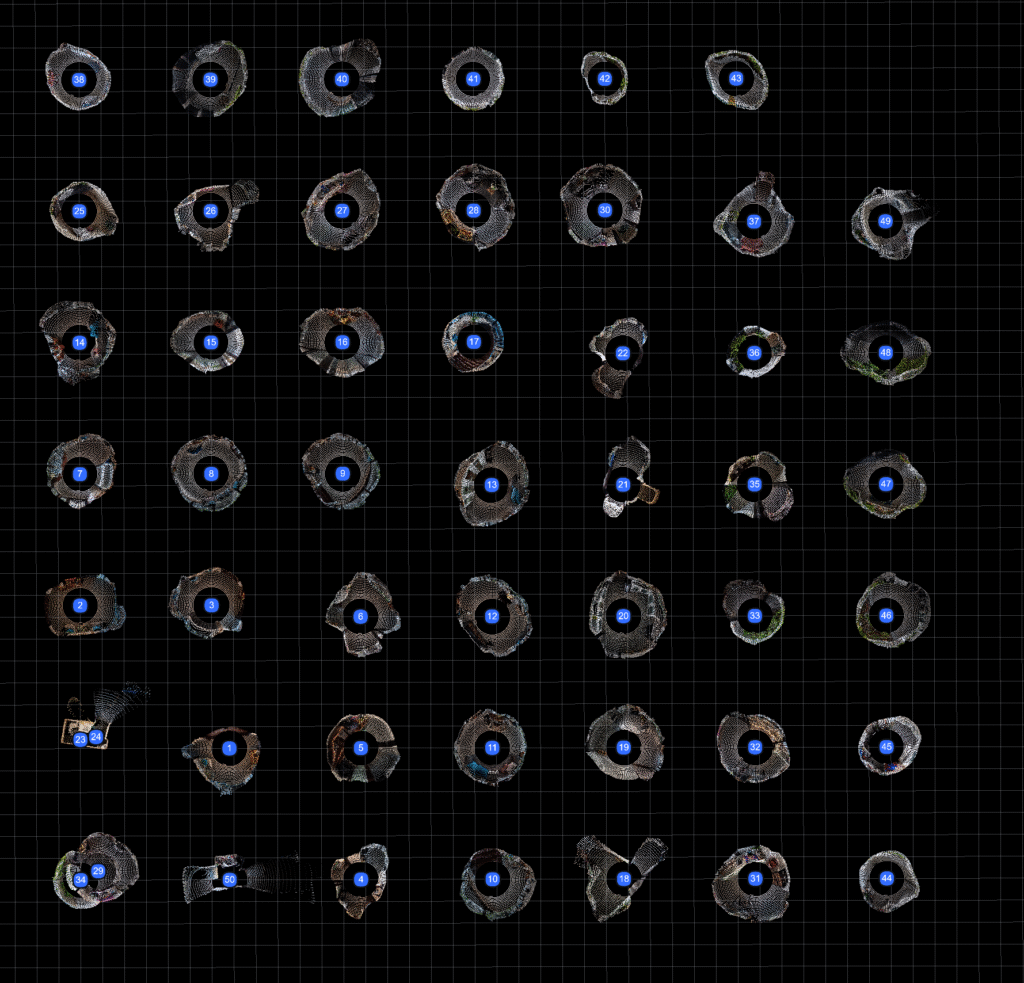

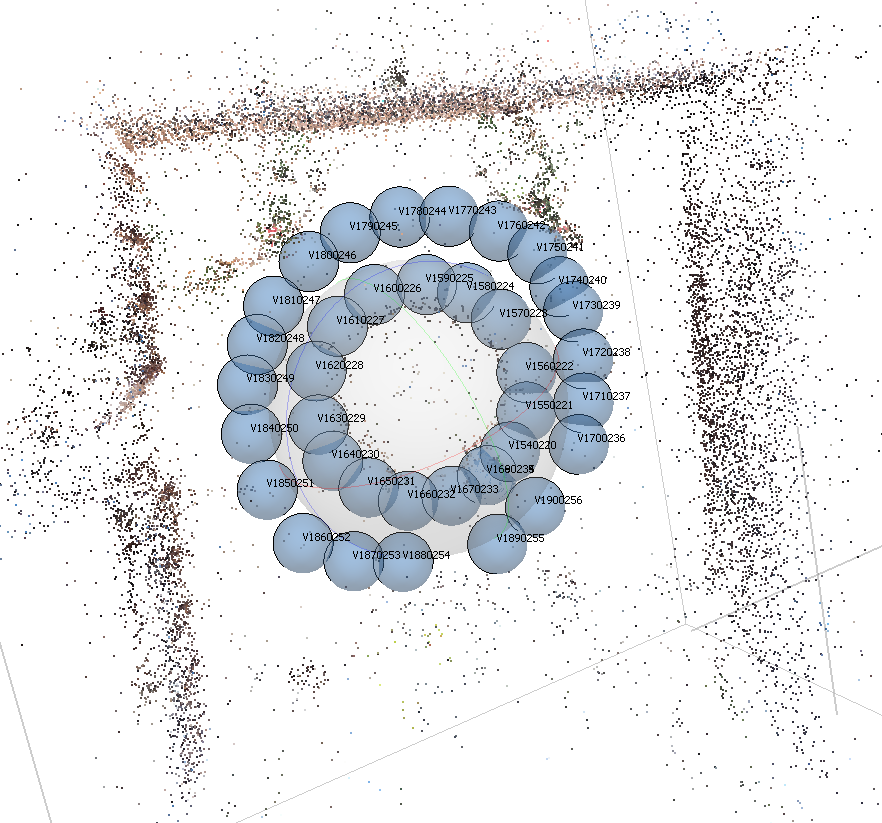

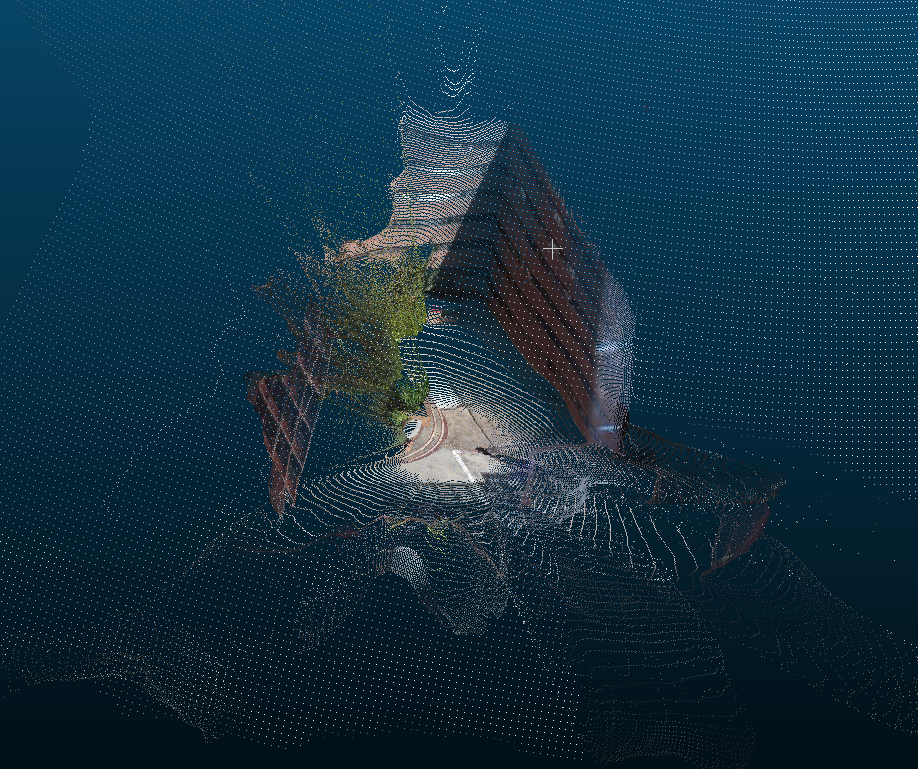

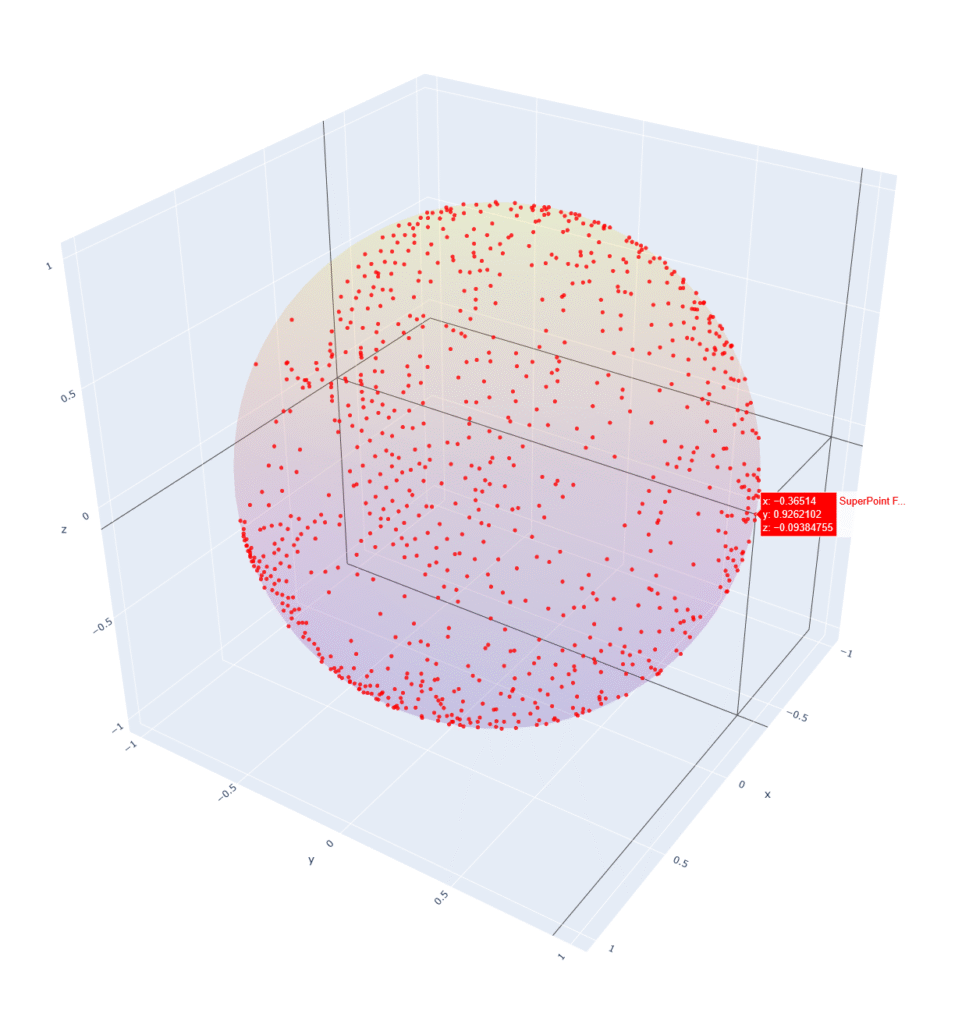

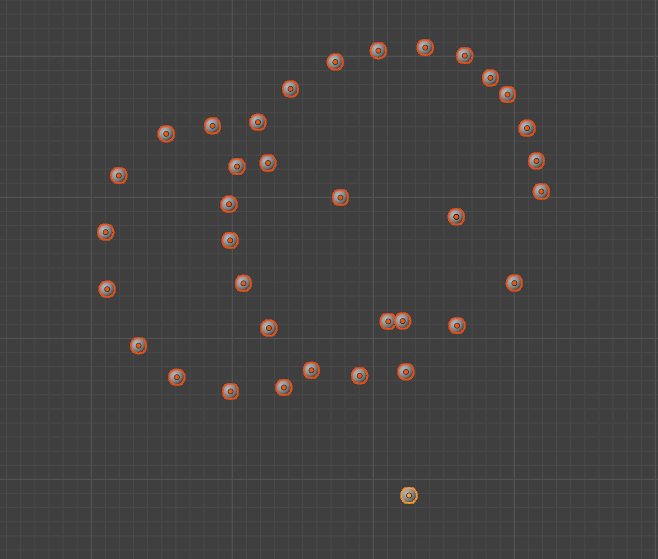

I moved on from commercial solutions and began looking at libraries. One workflow involved SuperPoint, SphereGlue, and OpenMVG. It first took each 360 photo and identified the important areas on its surface. It then sought to match those areas across the other 360 photos. This worked surprisingly well for a first attempt, correctly orienting 15 of the 37 photos.

Getting the remaining 22 photo positions was much harder. The original output used a process that attempts to triangulate positions based on multiple photos. While the 22 photos may share much overlap, the points reviewed were not sufficient for the algorithm to pair them with the other 15 photos, and they were dropped.

This will likely be the case for sparse indoor photo sets where each image is only guaranteed one line of sight to another image. To overcome this, I experimented with a chain in which images were oriented sequentially. This ensured all images would be positioned, but it came at the cost of quality. Since the positions aren’t all compared to each other, the distance between images is harder to calculate consistently. This appears to lead to overlapping images and tangents at the start or end of the chain. Rather than a proper series of two circles, the output was consistently one circle with another that was either stretched, overlaid, or included a straight tangent line.
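A toy 2D model can illustrate why sequential chaining distorts the loop. A pose estimated from only two views leaves the length of the step between them ambiguous, so each link in the chain can carry its own scale error. The sketch below is not the workflow used in these tests; it assumes cameras placed around a regular polygon, and the function names are made up for illustration.

```python
import cmath
import math
from typing import List


def chain_positions(turn_angles: List[float], step_lengths: List[float]) -> List[complex]:
    """Place cameras one after another in 2D.

    Camera 0 sits at the origin facing +x. For each link we move forward
    by the step length along the current heading, then rotate the heading
    by the turn angle. Positions are complex numbers (x + iy).
    """
    if len(turn_angles) != len(step_lengths):
        raise ValueError("need one turn angle per step length")
    positions = [0j]
    heading = 0.0
    for turn, length in zip(turn_angles, step_lengths):
        positions.append(positions[-1] + length * cmath.exp(1j * heading))
        heading += turn
    return positions


def loop_gap(step_lengths: List[float]) -> float:
    """Walk once around a regular polygon and return how far the last
    camera lands from the first. A perfect chain returns (nearly) zero."""
    n = len(step_lengths)
    turns = [2 * math.pi / n] * n
    positions = chain_positions(turns, step_lengths)
    return abs(positions[-1] - positions[0])


if __name__ == "__main__":
    print("consistent scale:", loop_gap([1.0, 1.0, 1.0, 1.0]))
    print("one link twice as long:", loop_gap([1.0, 1.0, 1.0, 2.0]))
```

With consistent step lengths the loop closes. A single mis-scaled link leaves a gap, which is the kind of stretched or tangent shape described above. Comparing every camera against several others, rather than only its predecessor, is what lets a global solver correct this.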

The quality of the output received from Matterport’s system indicates that a meaningful reconstruction can be performed on data as sparse as mine. The varying results delivered by the other tools confirm that sparse data comes with unique qualities that, if not addressed, lead to missing or misplaced cameras. I will continue to identify these qualities and review other libraries in search of a solution.

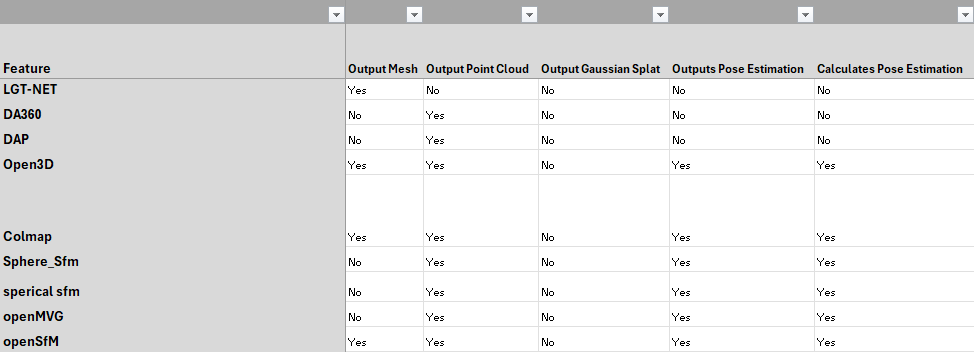

360-to-3D Tool Matrix

Inputting 360 photos and outputting a 3D model can have vastly different outcomes depending on the steps taken in between. The industry is wide and new. This leads to many different takes on how to perform the process. Through continued exposure to this environment I have begun to identify a variety of important qualities in assessing the tools. I decided to build a matrix.

This matrix includes information such as license, commercial availability, purpose, and whether a tool is outdated or has been replaced by another. This is especially important for assessing the overall viability of a workflow and for keeping track of the tools that have been tested. With over 90 tools, there is a lot to keep track of!
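One way to picture a row of such a matrix is as a small record that can be filtered. The sketch below is illustrative only: the field names mirror the columns described above, while the class, the helper, and the sample rows ("Tool A" and so on) are hypothetical and do not describe real tools.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class ToolEntry:
    name: str
    license: str
    commercial: bool
    purpose: str
    superseded_by: Optional[str] = None  # name of the replacement, if any
    tested: bool = False


def still_viable(tools: Iterable[ToolEntry], allowed_licenses: Set[str]) -> List[ToolEntry]:
    """Keep tools that have not been replaced and whose license is acceptable."""
    return [
        tool
        for tool in tools
        if tool.superseded_by is None and tool.license in allowed_licenses
    ]


# Hypothetical sample rows, not entries from the actual matrix.
EXAMPLE_MATRIX = [
    ToolEntry("Tool A", "MIT", False, "feature matching", tested=True),
    ToolEntry("Tool B", "Proprietary", True, "pose estimation"),
    ToolEntry("Tool C", "BSD-3-Clause", False, "pose estimation", superseded_by="Tool D"),
]

if __name__ == "__main__":
    for tool in still_viable(EXAMPLE_MATRIX, {"MIT", "BSD-3-Clause"}):
        print(tool.name, "-", tool.purpose)
```

Keeping the replacement's name in `superseded_by`, rather than a plain yes/no flag, makes it easy to follow a chain from an outdated tool to its current successor.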

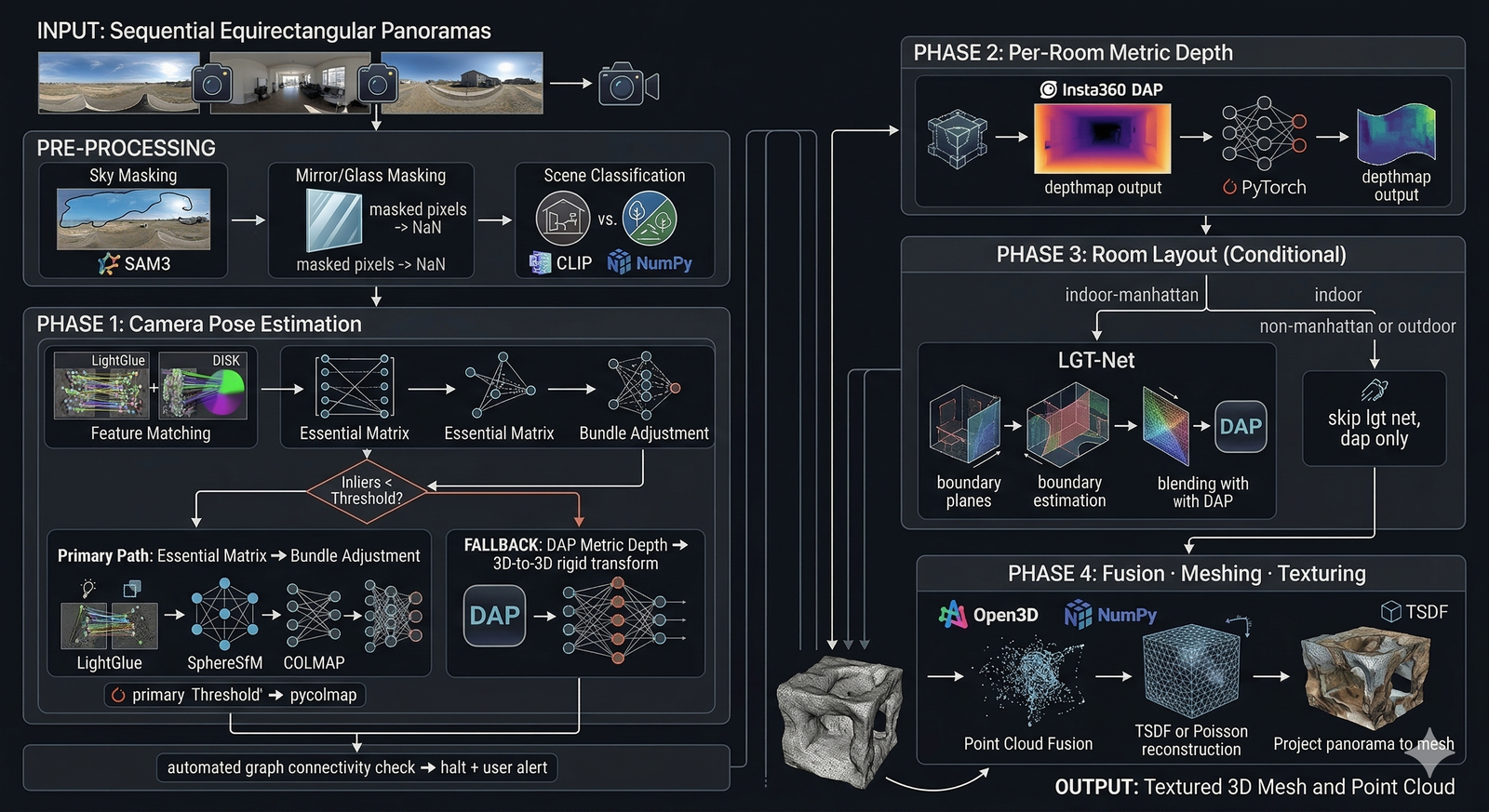

360-to-3D Reconstruction Architecture

Understanding the goal, our requirements, and the limitations of the available tools is key to laying out a successful roadmap.

Goal:

Input 360 equirectangular images from a sparse dataset and generate a navigable digital twin.

Requirements:

- Indoor and outdoor images accepted.

- Must work even with only a single line of sight to another camera, even through doorways. (Ex: One image in the hallway, one in the bathroom.)

- Must work even when rooms are repeated. (Ex: hotel or dorm may have two identical rooms.)

- Mirrors and reflections should appear flat and not hallucinate rooms beyond the plane.

- Should work with non-standard layouts like curved glass walls, large rooms and open spaces.

Tools:

- Segment Anything Model (SAM) 3: Identifies segments based on context like “mirror, window, sky”.

- LightGlue: Identifies features and matches them across images.

- SphereSFM – COLMAP: Uses matched features to construct structure from motion and deliver camera pose estimation.

- Depth Anything Panorama (DAP): Generates a depth map from equirectangular photos.

- LGET-NET: Room layout estimation from 360 equirectangular images.

- Open3D: 3D point cloud reconstruction.

- Truncated Signed Distance Function (TSDF): 3D mesh generation and texturing.

The goal appears straightforward: input 360 photos and output a 3D mesh. While a human is likely to understand the room-to-room layout, it takes a lot of calculation for a computer to understand the same thing. With these tools, most of the required calculations should be available in a usable state. The next step is to bridge their capabilities and test the result.
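One of the bridging steps, turning a panoramic depth map into 3D points that a point cloud library can consume, can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the output format of any tool listed above: depth is assumed to be the radial distance from the camera, the image centre is assumed to look along +z with +y up, and the function names are made up for this example.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def pixel_to_point(u: int, v: int, depth: float, width: int, height: int) -> Point:
    """Map one equirectangular pixel and its radial depth to a 3D point.

    Assumed convention: columns span longitude -pi..pi (left to right),
    rows span latitude +pi/2..-pi/2 (top to bottom), sampled at pixel centres.
    """
    lon = ((u + 0.5) / width) * 2.0 * math.pi - math.pi
    lat = math.pi / 2.0 - ((v + 0.5) / height) * math.pi
    x = depth * math.cos(lat) * math.sin(lon)
    y = depth * math.sin(lat)
    z = depth * math.cos(lat) * math.cos(lon)
    return (x, y, z)


def depth_map_to_points(depth_map: Sequence[Sequence[float]]) -> List[Point]:
    """Convert a row-major depth map to points, skipping non-positive depths
    (treated here as 'no measurement')."""
    height = len(depth_map)
    width = len(depth_map[0])
    points = []
    for v, row in enumerate(depth_map):
        for u, depth in enumerate(row):
            if depth > 0:
                points.append(pixel_to_point(u, v, depth, width, height))
    return points


if __name__ == "__main__":
    tiny_depth_map = [[2.0, 2.0, 2.0, 2.0], [0.0, 3.0, 3.0, 3.0]]
    for point in depth_map_to_points(tiny_depth_map):
        print(point)
```

Each point still lives in its own camera's frame. Placing it in the shared scene requires the camera pose from the SfM step, which is why pose estimation comes first in the pipeline.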

GMAT Study Plan

As a lifelong learner, I am always looking for ways to grow and further educate myself. Through years of employment, I have contributed to a variety of organizations. Along the way I witnessed systems and decisions that led to success, and at other times to great hardship, for the members of the organization. I have taken courses, read books, and engaged in groups to develop my decision-making skills and my ability to cooperate and find win/win solutions. I have decided to apply to a Master of Business Administration (MBA) program, whose curriculum is designed to improve my ability to take on responsibilities and to lead my team, and all stakeholders, toward success more often.

To apply for an MBA program I must take the Graduate Management Admission Test (GMAT). I have not taken a standardized test in about half a decade. I took an evaluation, and some of my skills were surprisingly good, while others need improvement. I decided to take the advice of the study guide and pick a day for my test. Then I gathered the tools I had available for practice questions and practice tests. With my resources ready and a date in mind, I began filling out an Excel spreadsheet. The spreadsheet spreads my studying evenly across the time I have to complete it. It follows the S.M.A.R.T. criteria (specific, measurable, achievable, relevant, time-bound). I chose to add a series of rewards as well. This “carrot on a stick” is often a tasty meal I get infrequently (like hot pot), and will hopefully encourage me through the more challenging weeks.

Summary

This week I continued testing options for identifying camera positions in 3D space. From there I outlined what I’ve learned so far about the environment and tools available for 360-to-3D pipelines. With this knowledge, I was able to conceive the first draft of a locally available option for 3D reconstruction from sparse equirectangular images. Beyond this process, I outlined my plan of study for taking the GMAT. This week expands upon last week’s theme of “Research” by taking the information we’ve found and beginning to shape it into the structure of a solution.